I grew up on the fringes of suburbia, at the cusp of what most would consider rural Pennsylvania.

In the Spring, we gagged from the stench of manure.

In the Fall, Schools closed on the first day of hunting season.

In the Winter, fresh, out-of-season fruits were missing from the grocer.

All year round, procuring exotic ingredients like avocados entailed a forty-five-minute drive toward civilization.

During the school year, I was the first to be picked up and last to be dropped off by the bus,

enduring a ride that was well over an hour each way.

I also grew up in tandem with the burgeoning cruise line industry.

My parents quickly adopted that mode of travel, and it eventually became our sole form of vacation.

As a kid, it was great: I had the freedom to roam the decks on my own, there were innumerable activities,

and an unlimited supply of delicious foods with exotic names like “Consommé Madrilene” and “Contrefilet of Prime

Beef Périgueux”.

My parents loved it because it kept their kids busy, was very affordable (relative to equivalent landed resorts),

and had sufficient calories to satisfy their growing boy’s voracious appetite.

This was also a transitional period when cruises were holding onto the vestiges and traditions of luxury ocean

liners. All cruise lines had strict dress codes, requiring formal attire some evenings (people brought tuxedos!).

The crew were largely Southern and Eastern European. As a kid who had never traveled outside North America, it felt

like I was LARPing as James Bond.

When I moved out of my parents’ house for college, I wanted a change of scenery.

While I had the option to move to a “college town”, I instead chose to live in a large city.

And I loved it.

It was like living on a cruise, all year round: Activities galore, amazing food, and all within a short distance of

each other.

A friend could call me up and say, ”Hey, we are hanging out at [X], would you like to join us?” And, regardless of

where X was in the city, I could meet them there within fifteen minutes either by walking, biking, or taking public

transport.

I didn’t need cruises anymore.

Preface

This year, for his birthday, my father only had one request: that he and his extended family take a cruise to

celebrate. I just returned from that cruise, and it was a very different experience from the ones of my youth.

As an adult who had by this point lived the majority of his life in large cities,

all I wanted to do was lay by a pool or, preferably, the beach all day and read a book.

Those are both difficult things when you’re sharing a crowded pool space with over six thousand other people,

and the ship doesn’t typically dock close to a good beach.

Skating rink? Gimmicky specialty restaurants? Luxury shopping spree? Laser tag? Pub trivia? Theater productions?

Comedy shows?

I can do all of those things any day of the week a short distance from my house.

When I vacation, I want to escape all that freneticism.

My wife, kids, and I were the only urbanites in our group, and my parents didn’t seem to comprehend why we

had such little interest in all the activities. And then it dawned on me: A cruise is just a simulated city.

Why would I want an artificial version of what I already have?

So, lying down on a deck chair, instead of reading my book, I started writing.

The idea of simulated urbanism isn’t new,

but it’s typically discussed in terms of curated amusement parks like Disney and the emergence of suburban

“lifestyle center” developments. Therefore, initially, my goal was to channel this insight into as obnoxious a

treatise as possible, in order to trigger my suburbanite traveling companions. Simulated urbanism? That

reminded me of Baudrillard!

I would make it a completely over-the-top academic analysis of the topic.

The following was compiled from a series of texts I sent to our group

chat throughout the cruise.

Abstract

This article explores the paradox of vacation preferences among suburban Americans who gravitate toward densely populated environments such as cruises, European cities, and amusement parks, despite residing in sparsely populated areas. It examines how cruises and lifestyle centers, which mimic urban density while offering suburban convenience, satisfy the suburban desire for urban-like experiences. The article contrasts these preferences with those of urban Americans, who experience density daily and may seek different vacation experiences. By analyzing the dynamics of suburban and urban vacation choices, we highlight the complex interplay between suburban living and the pursuit of urban escapism.

Simulated Urbanism

Simulacra and Simulation

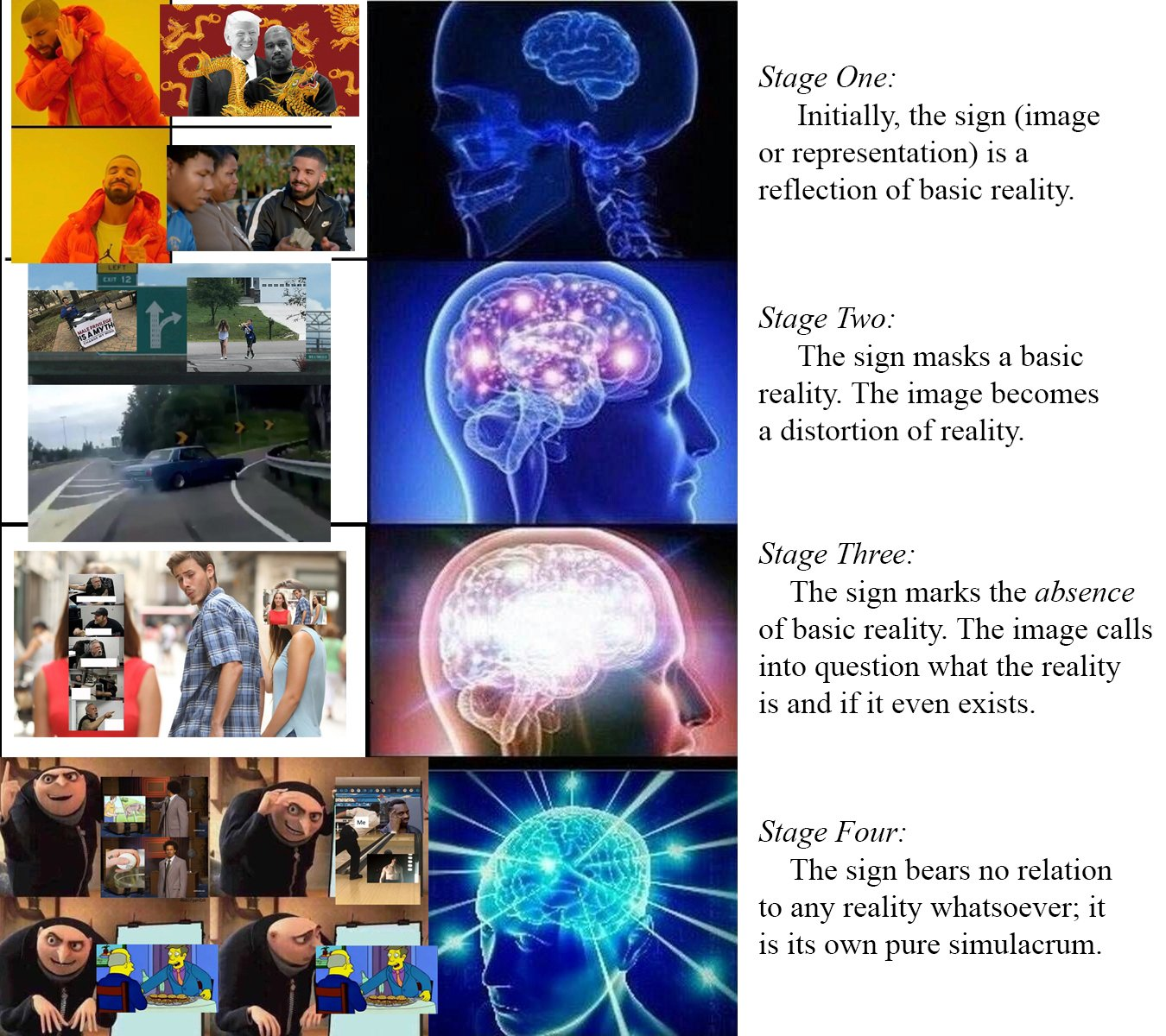

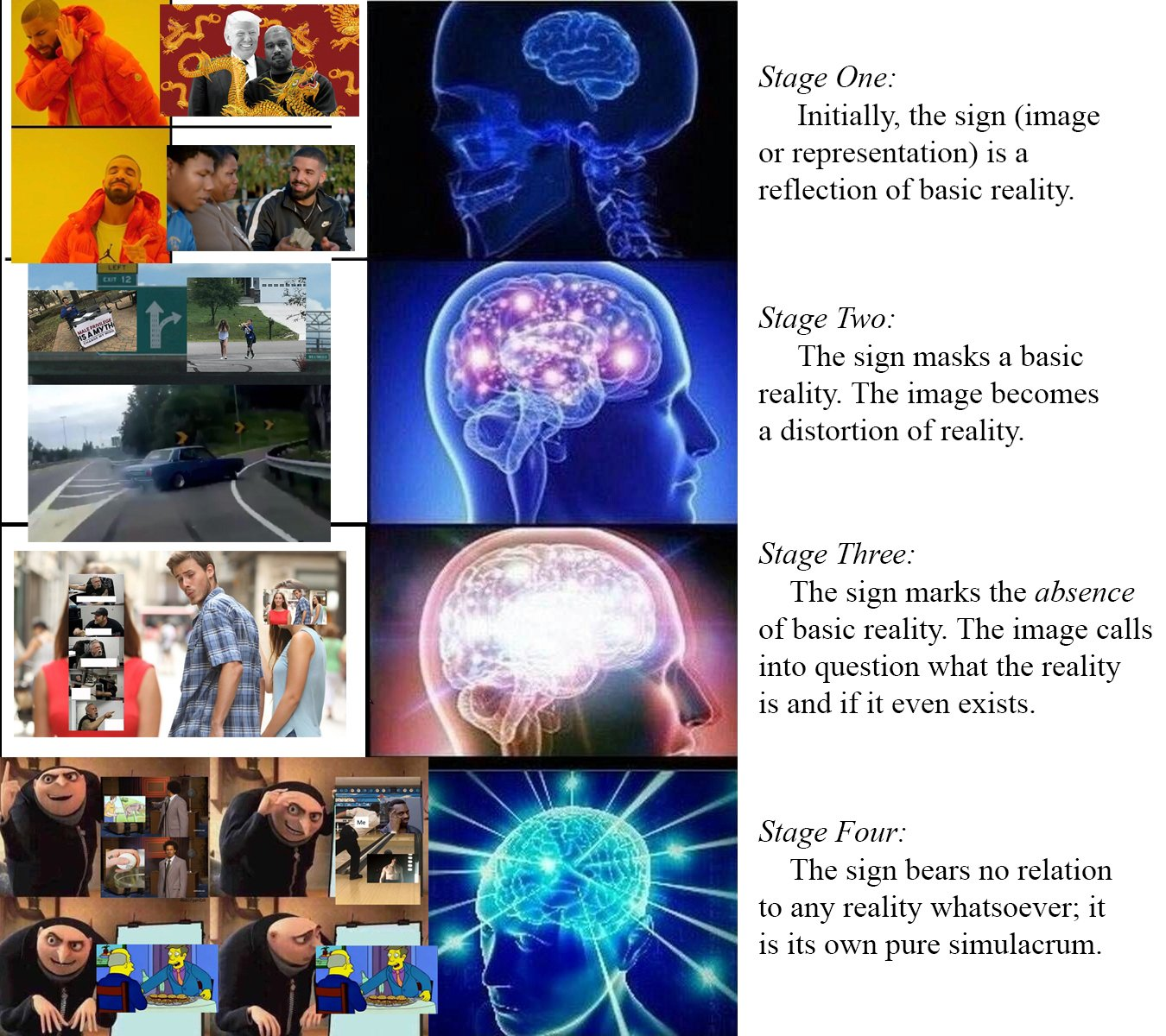

The concept of ”simulated urbanism,” as seen in cruise ships and lifestyle centers, can be analyzed through the lens

of Jean Baudrillard’s philosophy, particularly his ideas on simulation and hyperreality. Baudrillard argues that in

contemporary society, simulations—representations or imitations of reality—have become so pervasive that they blur the

distinction between what is real and what is artificially constructed. In the context of simulated urbanism, lifestyle

centers and cruise ships serve as hyperreal environments that imitate the vibrancy and density of urban life without the

complexities and inconveniences associated with real urban spaces. They provide an experience that is more accessible,

manageable, and, in some ways, more appealing than the genuine urban environments they emulate.

According to Baudrillard, these simulations can create a sense of hyperreality, where the imitation becomes more

influential or desirable than reality itself. Suburban Americans, who are accustomed to the sprawling, car-dependent

landscapes of suburbia, find in these simulated urban environments a curated and sanitized version of city life. This

artificial urbanism allows them to engage with the aesthetics and experiences of urban density—such as walkability,

diverse entertainment, and social interaction—without the perceived drawbacks of real urban living, like congestion,

pollution, and crime. Thus, simulated urbanism not only fulfills a desire for the excitement and dynamism of city life

but also constructs a hyperreal experience that is tailored to the preferences and comfort of suburban consumers,

aligning with Baudrillard’s notion of a society increasingly detached from the authenticity of the real world.

Metamodernism

Suburbanites’ predilections for cruising can be interpreted through the theoretical framework of metamodernism, which posits an oscillation between opposing cultural and experiential paradigms. The cruise experience encapsulates a synthesis of modernist aspirations for progress, order, and structured entertainment, alongside a postmodern sensibility characterized by irony and skepticism towards mass consumerism and the spectacle. This dialectical tension aligns with metamodernism’s core principle of navigating and reconciling contradictions. Within the confines of a cruise, suburbanites engage with the modernist ideal of escape and luxury, encountering a curated simulacrum that offers both adventure and novelty. Simultaneously, they remain cognizant of the inherent artifice and commodification intrinsic to the cruise experience, reflecting a postmodern consciousness of the limitations and constructed nature of such escapism.

Furthermore, the pragmatic idealism inherent in metamodernism is evident in the suburban pursuit of cruising, representing a quest for authenticity within a meticulously orchestrated environment. Suburbanites partake in cruising as a form of meaningful escapism that, although commodified, facilitates genuine opportunities for relaxation, social interaction, and cultural exploration. This dynamic reflects a metamodern synthesis of sincerity and irony, wherein participants seek genuine engagement and fulfillment while maintaining an awareness of the artificial and consumerist underpinnings of the cruise industry. The cruise, thus, serves as a microcosm of metamodern cultural hybridity, integrating diverse influences and experiences into a cohesive assemblage that enables suburbanites to navigate the complexities of contemporary existence with both optimism and reflexivity. This exemplifies the metamodern tension between the yearning for authentic connection and the recognition of its mediated nature, embodying a dialectical interplay that is central to metamodern thought.

Dialectics

(To be read as if dictated by Slavoj Žižek.)

From a Hegelian perspective, urbanites’ preferences for relaxing vacations can be understood through the lens of dialectical progression and the quest for synthesis between opposing elements in their lives. Hegel’s philosophy emphasizes the process of thesis, antithesis, and synthesis, [sniff] where the contradictions and tensions between different aspects of existence lead to the development of higher levels of understanding and being. A dance of contradictions and tensions.

Urbanites are like rats in a maze of their own making, living in this frenetic environment of constant stimulation and complexity. So, what do they do? They escape to a vacation that provides the antithesis of urban life, a necessary counterbalance that allows them to confront the contradictions of their daily existence.

In this dialectical process, the vacation can be seen as a moment of synthesis, where urbanites integrate the contrasting aspects of their existence—work and leisure, complexity and simplicity, stimulation and relaxation. Through this synthesis, they achieve a more harmonious state of being, temporarily resolving the contradictions inherent in their lives. [nose pull] Hegel might argue that this pursuit of balance is part of a broader process of self-realization and development, as individuals continually seek to reconcile opposing forces within themselves and their environments. This dialectical movement reflects a deeper philosophical journey toward self-awareness and fulfillment, where each vacation experience contributes to a more nuanced understanding of personal and existential needs.

Moreover, Hegel would likely emphasize that this process is not static but dynamic, as urbanites constantly redefine and re-evaluate their desires and experiences in pursuit of higher forms of understanding and contentment. [sniff] This ongoing dialectical interaction between urban life and vacation preferences underscores the complexity of human existence and the continuous evolution of individual consciousness within the broader historical and cultural context.

What Would Marx Say?

(For extra fun, to be read as if dictated by Jordan Peterson.)

When we examine the suburbanite’s preference for urban-like vacation experiences—such as cruising or visiting bustling cities—through the lens of Marxist theory, we uncover something quite profound about the alienation inherent in capitalist societies. Suburban life is often marked by routine and compartmentalization, a strong reliance on cars, and an emphasis on private space. This creates an environment of isolation and fragmentation, which Marx identified as symptomatic of capitalist production. The sprawling nature of suburbia physically separates individuals from their workplaces, social hubs, and cultural activities, generating a dichotomy between home life and community engagement. This alienation from collective social experiences mirrors the detachment workers feel from the products of their labor and from each other in a capitalist framework.

Thus, when suburbanites seek out vacations in dense, walkable environments, they’re searching for a temporary reprieve from this isolation. These vacations allow them to engage with the social interactions and cultural experiences that are often missing from their everyday lives. In this way, vacations become a commodified form of leisure within capitalist society, packaged and sold as products designed to alleviate the stresses of alienation and labor. Suburbanites, who feel the pressures of maintaining a lifestyle dictated by capitalist norms—like homeownership and consumerism—turn to vacations as a form of respite from these demands. Cruises and urban vacations offer a concentrated form of entertainment and cultural engagement, providing an illusion of freedom and choice that contrasts with the regulated and constrained nature of suburban life. While these vacations temporarily satisfy the need for genuine human connection and cultural enrichment, Marxist theory would argue that they also reinforce the capitalist cycle, as individuals must return to their suburban routines to earn the means to participate in such leisure activities. Thus, vacations, though they offer a brief escape from alienation, ultimately underscore the pervasive influence of capitalism on leisure and personal fulfillment.

Conclusions

As I reflect on the whirlwind of contradictions, philosophies, and cultural dynamics explored in this article, I must

admit that I find myself utterly exhausted. Much like the aftermath of a cruise, where the buffet line feels both

endless and insurmountable, I am too fatigued to draw any tidy conclusions. So, dear reader, as I lean back in my

metaphorical deck chair, I invite you to navigate these intellectual waters and draw your own conclusions. Like the

towel animals on your cabin bed, reality might be folded and shaped in surprising ways, but the essence remains

yours to unravel. Bon voyage in your own journey of trolling! Or is it trawling?

This is an excerpt from the

This is an excerpt from the